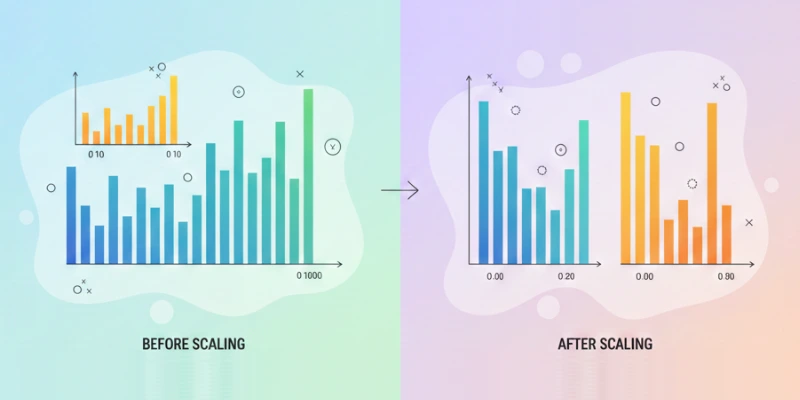

Feature scaling is one of the most important steps in the data preprocessing phase of a data science project. It entails adjusting the range of variables so that features sit on comparable scales and none dominates the analysis simply because of its units. Without proper scaling, features with larger numerical values can dominate scale-sensitive models, leading to inaccurate or biased results. To learn these essential techniques in depth, you can enroll in a Data Science Course in Mumbai at FITA Academy and gain hands-on experience with real-world datasets.

Understanding Feature Scaling

In a dataset, different features often have different units or ranges. For example, one feature may represent age with values from 0 to 100, while another represents income in thousands or millions. If these features are used directly in machine learning models, the model may give more weight to the feature with larger numerical values, regardless of how informative it is. Feature scaling puts all features on a comparable numerical footing and can improve the performance of algorithms that rely on distance calculations or gradients.
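To make the age and income example concrete, here is a minimal sketch using only the Python standard library. The three people, the `euclidean` and `rescale` helpers, and the assumed feature ranges (age 0 to 100, income 0 to 100,000) are all hypothetical values chosen for illustration.

```python
import math

def euclidean(a, b):
    """Straight-line distance between two equal-length points."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

# Hypothetical people described as (age in years, income in currency units).
alice = (25, 50_000)
bob = (60, 50_500)    # very different age, similar income
carol = (26, 58_000)  # similar age, different income

# On raw values the income gap dominates the distance.
raw_to_bob = euclidean(alice, bob)
raw_to_carol = euclidean(alice, carol)

# Assumed ranges for this sketch: age 0-100, income 0-100,000.
def rescale(person):
    age, income = person
    return (age / 100, income / 100_000)

scaled_to_bob = euclidean(rescale(alice), rescale(bob))
scaled_to_carol = euclidean(rescale(alice), rescale(carol))

print("raw:    Bob", round(raw_to_bob, 1), "Carol", round(raw_to_carol, 1))
print("scaled: Bob", round(scaled_to_bob, 3), "Carol", round(scaled_to_carol, 3))
```

On the raw values Bob looks like Alice's nearest neighbour even though he is 35 years older, because a 500-unit income gap is numerically smaller than an 8,000-unit one and age barely registers. Once both features share a 0 to 1 range, Carol becomes the closer match.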

Methods of Feature Scaling

There are multiple methods to scale features. Min-max scaling adjusts the values of a feature to fit within a specific range, often from 0 to 1. Standardization transforms features so that they have a mean of zero and a standard deviation of one. Choosing the right method depends on the type of data and the algorithm being used.
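The two methods can be sketched in a few lines of plain Python. The function names `min_max_scale` and `standardize` and the sample `ages` list are illustrative, and the use of the population standard deviation is an assumption of this sketch rather than something the article specifies.

```python
import statistics

def min_max_scale(values, new_min=0.0, new_max=1.0):
    """Linearly map values so the smallest becomes new_min and the largest new_max."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant feature carries no spread; map everything to new_min.
        return [new_min for _ in values]
    span = hi - lo
    return [new_min + (v - lo) * (new_max - new_min) / span for v in values]

def standardize(values):
    """Shift to mean 0 and divide by the population standard deviation."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    if std == 0:
        return [0.0 for _ in values]
    return [(v - mean) / std for v in values]

ages = [20, 30, 40, 50, 60]
scaled = min_max_scale(ages)
standardized = standardize(ages)

print(scaled)        # [0.0, 0.25, 0.5, 0.75, 1.0]
print(standardized)  # symmetric around 0, standard deviation 1
```

Both transformations are linear, so they change the location and spread of a feature but not the shape of its distribution.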

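Standardization is often preferred when features are roughly normally distributed or when an algorithm works best with zero-centered inputs, and it is less distorted by outliers than min-max scaling. Min-max scaling works well when a bounded range is required and the data contains no extreme outliers, whether or not it follows a normal distribution; a single extreme value can otherwise compress all other values into a narrow band. To gain practical knowledge of these techniques, consider joining a Data Science Course in Kolkata and learn from experienced instructors with hands-on projects.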

Why Feature Scaling Matters

Feature scaling is crucial for several reasons. Many machine learning algorithms, including k-nearest neighbors, support vector machines, and methods trained with gradient descent, are sensitive to the scale of features. Without scaling, these algorithms may converge slowly or produce suboptimal results. Additionally, feature scaling helps prevent bias toward features with larger values, so that a feature's influence on predictions reflects its relationship to the target rather than its units. This tends to produce models that are more accurate and reliable.
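The slow-convergence point can be illustrated with a small experiment. The sketch below fits a linear model by batch gradient descent twice, once on raw features and once on standardized ones, and counts the updates needed to reach a fixed error. The data, the helper names, and the step-size rule (0.5 divided by the sum of mean squared column values) are assumptions made for this illustration, not a recommended training recipe.

```python
import statistics

def standardize(values):
    """Rescale values to mean 0 and (population) standard deviation 1."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    return [(v - mean) / std for v in values]

def steps_to_converge(features, targets, tol=1e-6, max_steps=10_000):
    """Run batch gradient descent on mean squared error for a linear model.

    Returns the number of updates needed for the error to drop below tol,
    or max_steps if that never happens.
    """
    rows = [[1.0, *row] for row in features]  # leading 1.0 is the intercept
    n, d = len(rows), len(rows[0])
    # Conservative step size derived from the data, so the same rule is
    # applied to both runs and neither one can diverge.
    trace = sum(x * x for row in rows for x in row) / n
    lr = 0.5 / trace
    weights = [0.0] * d
    for step in range(max_steps):
        errors = [sum(w * x for w, x in zip(weights, row)) - y
                  for row, y in zip(rows, targets)]
        if sum(e * e for e in errors) / n < tol:
            return step
        for j in range(d):
            grad_j = 2.0 / n * sum(e * row[j] for e, row in zip(errors, rows))
            weights[j] -= lr * grad_j
    return max_steps

# Hypothetical data: the target is an exact linear function of both features.
ages = [22, 35, 47, 51, 63]
incomes = [28_000, 52_000, 61_000, 45_000, 90_000]
targets = [3.0 + 0.5 * a + 0.0002 * i for a, i in zip(ages, incomes)]

raw_steps = steps_to_converge(list(zip(ages, incomes)), targets)
scaled_steps = steps_to_converge(
    list(zip(standardize(ages), standardize(incomes))), targets)

print("raw features:   ", raw_steps, "steps")
print("scaled features:", scaled_steps, "steps")
```

With raw features the large income values force a tiny step size, so the age and intercept weights barely move and the run exhausts its step budget. With standardized features the same rule allows a much larger step and the error falls below the tolerance in far fewer updates.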

Impact on Model Performance

Proper feature scaling can significantly improve model performance. It helps models converge faster during training and reduces the risk of numerical instability. For example, gradient descent benefits from scaled features because the cost surface becomes more evenly curved in every direction, so a single learning rate moves the algorithm efficiently toward the minimum of the cost function. Scaling also enhances interpretability in certain models, such as linear models, where coefficients on scaled features can be compared directly to help identify the most influential factors. To master these techniques and build strong practical skills, you can join a Data Science Course in Delhi and learn from industry experts.

Best Practices in Feature Scaling

When applying feature scaling, it is important to fit the scaling parameters (such as the minimum and maximum, or the mean and standard deviation) on the training data only. Fitting them on the entire dataset lets information from the test set leak into training, which leads to data leakage and overly optimistic evaluation results. Additionally, always scale test or validation data using the same parameters obtained from the training data. This ensures consistency and prevents introducing errors during model evaluation.
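The fit-on-train, apply-everywhere pattern can be sketched as follows. `TrainOnlyStandardizer` is a hypothetical helper written for this example, and the income figures are made up; libraries such as scikit-learn expose the same idea through separate fit and transform steps.

```python
import statistics

class TrainOnlyStandardizer:
    """Learns mean and standard deviation once, then reuses them."""

    def fit(self, values):
        self.mean_ = statistics.fmean(values)
        # Fall back to 1.0 so a constant feature does not divide by zero.
        self.std_ = statistics.pstdev(values) or 1.0
        return self

    def transform(self, values):
        return [(v - self.mean_) / self.std_ for v in values]

# Hypothetical split of an income feature.
train_incomes = [30_000, 40_000, 50_000, 60_000, 70_000]
test_incomes = [45_000, 120_000]

scaler = TrainOnlyStandardizer().fit(train_incomes)  # parameters from training data only
train_scaled = scaler.transform(train_incomes)
test_scaled = scaler.transform(test_incomes)         # same parameters, never refit

print(scaler.mean_, round(scaler.std_, 2))
print([round(v, 3) for v in test_scaled])
```

Test values may land outside the range seen during training, as the second test income does here. That is expected and is preferable to refitting the scaler on data the model is supposed to be evaluated on.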

Feature scaling is a fundamental part of preparing data for machine learning. It puts features on a comparable scale so that none dominates model training because of its units, improves the performance of scale-sensitive algorithms, and can make some models easier to interpret. Ignoring this step can lead to poor model accuracy and biased results. To gain a deep understanding of feature scaling and other essential data science techniques, consider enrolling in a Data Science Course in Jaipur and learn from experienced instructors with practical, hands-on projects.
